Working in mixed reality with room import and spatial anchors

Hilmar Gunnarsson October 11, 2022

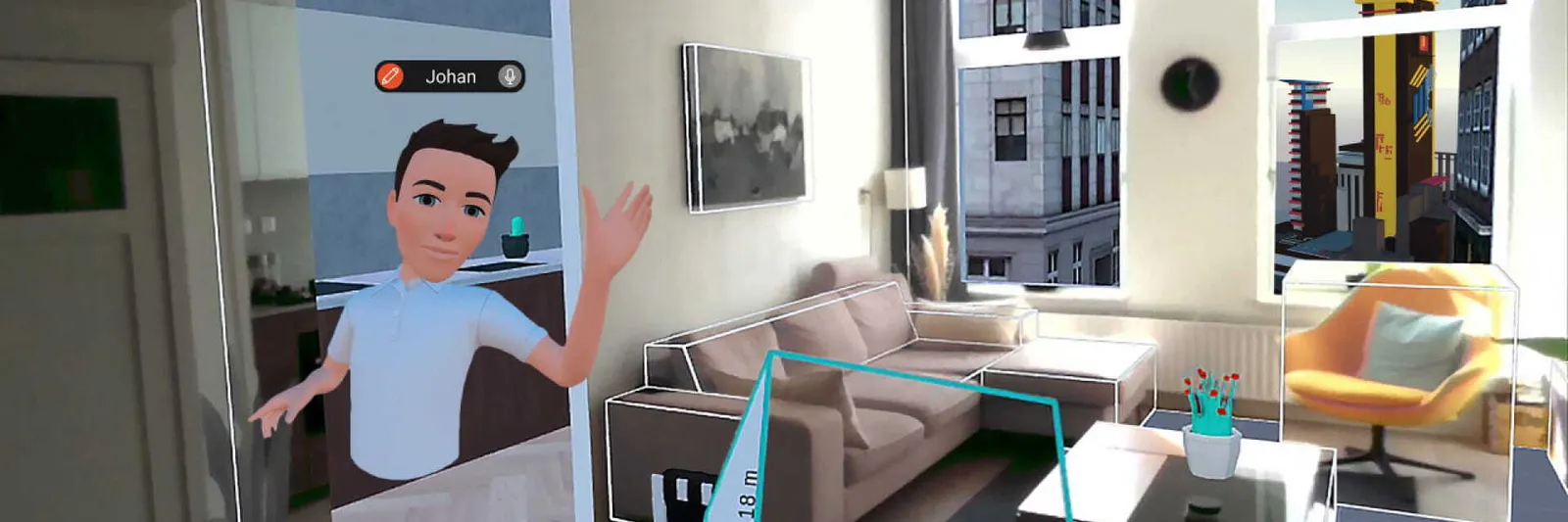

Mixed reality design in Arkio is now even more powerful on Meta Quest & Meta Quest Pro!

Please note: Room import and spatial anchors require Quest OS v46. On devices running an earlier version, developer mode must be turned on.

From the very beginning our objective at Arkio has been to enable users to design in mixed reality. Being able to sketch new design ideas on top of the real world and then walk around seeing those designs is nothing short of magical.

Simplifying mixed reality design

While we first introduced passthrough modeling in version 1.2 of Arkio, version 1.3 takes this experience to a whole new level using various new technologies and UX innovations. Our goals with this update are to simplify the mixed reality workflow and to allow users to dive into it with as little setup as possible. The mixed reality features introduced with this update include:

- Support for spatial anchors using Meta Presence Platform

- Room import from device using Meta Presence Platform

- Locking the user's position at human scale and locking the Arkio worktable at larger scales

- Constrained adjustments for aligning the virtual and real world when needed

- Movement and visualization of locked world positions

Spatial anchors

When designing in mixed reality it’s vital to keep the virtual and physical worlds aligned so that virtual objects, walls and furniture retain their correct positions. With this update, we’re adding support for spatial anchors using the Meta Presence Platform. Using spatial anchors, Arkio locks a virtual scene in place as soon as a user activates passthrough modeling, for example by toggling on the passthrough bracelet button and placing a virtual object in a room. These anchors are persisted on the device and in each scene that is locked to the physical space. This means that whenever the scene is viewed in that same physical space, the virtual objects are automatically aligned to their prior locations in the space.
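To illustrate the idea of re-aligning a scene from a persisted anchor, here is a minimal sketch in Python. It is not Arkio's implementation and does not use the Presence Platform API: `Pose2D`, `scene_to_world` and `apply` are hypothetical names, and the pose is simplified to a position on the floor plane plus a yaw angle. Given the anchor's pose as stored in the scene and the pose the device reports for the same anchor in the current session, it computes the rigid transform that makes the two coincide.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose2D:
    """Hypothetical simplified pose: floor-plane position plus yaw in radians."""
    x: float
    z: float
    yaw: float


def scene_to_world(anchor_in_scene: Pose2D, anchor_in_world: Pose2D) -> Pose2D:
    """Rigid transform (stored as a Pose2D) that maps the anchor's scene pose
    onto the pose the device reports for it in the current session."""
    yaw = anchor_in_world.yaw - anchor_in_scene.yaw
    c, s = math.cos(yaw), math.sin(yaw)
    # Rotate the anchor's scene position, then translate it onto the world position.
    rx = c * anchor_in_scene.x - s * anchor_in_scene.z
    rz = s * anchor_in_scene.x + c * anchor_in_scene.z
    return Pose2D(anchor_in_world.x - rx, anchor_in_world.z - rz, yaw)


def apply(transform: Pose2D, x: float, z: float) -> tuple:
    """Map a scene-space point into world space using the transform."""
    c, s = math.cos(transform.yaw), math.sin(transform.yaw)
    return (c * x - s * z + transform.x, s * x + c * z + transform.z)
```

Applying the resulting transform to every object in the scene would place each one where it sat relative to the anchor when the scene was last locked.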

Room import

Using the Scene API from Meta's Presence Platform, Arkio can now import a physical room so that users can instantly start mixing and modifying it. All that's required is to outline a room using Room Setup on Meta Quest or Meta Quest Pro, then import it into Arkio.

When a room is imported, Arkio automatically creates a new scene, locks the room into position using spatial anchors and teleports the user into the room at true scale. Using the data from the Scene API, it creates a floor, ceiling and walls using Arkio geometry that users can modify at will. All these elements come painted with Arkio's special passthrough material so users see their physical environment through the element surfaces. Users can then make modifications such as applying different materials to walls, adding virtual objects to the room or creating a virtual window looking into the virtual scene beyond.
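The geometry step can be pictured with a small Python sketch. It assumes, purely for illustration, that the imported room arrives as a floor outline (a list of corner points) and a ceiling height; the `Surface` type and `build_room` function are hypothetical and do not reflect the actual Scene API data format or Arkio's geometry classes.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point2 = Tuple[float, float]          # (x, z) corner on the floor plane
Point3 = Tuple[float, float, float]   # (x, y, z) with y pointing up


@dataclass
class Surface:
    """Hypothetical editable surface; every imported surface starts as passthrough."""
    kind: str
    vertices: List[Point3]
    material: str = "passthrough"


def build_room(outline: List[Point2], height: float) -> List[Surface]:
    """Create a floor, a ceiling and one wall per outline edge."""
    if len(outline) < 3:
        raise ValueError("a room outline needs at least three corners")
    floor = Surface("floor", [(x, 0.0, z) for x, z in outline])
    ceiling = Surface("ceiling", [(x, height, z) for x, z in outline])
    walls = []
    for i, (x0, z0) in enumerate(outline):
        # Wrap around so the last corner connects back to the first.
        x1, z1 = outline[(i + 1) % len(outline)]
        walls.append(Surface("wall", [
            (x0, 0.0, z0), (x1, 0.0, z1), (x1, height, z1), (x0, height, z0),
        ]))
    return [floor, ceiling] + walls
```

In this sketch, repainting a wall amounts to changing its `material` field, which mirrors how a user might swap the passthrough material for an ordinary one.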

Locking at different scales

When working in Arkio users continually transition between different scales. One minute a user is working on a design at large scale with the Arkio worktable hovering in front and the next she teleports down to human scale to experience her design as if she’s really there. Changing scales in Arkio is easy using either the teleport function or the grip buttons. Working at human scale and working at larger scales represent somewhat different use cases, something we had to consider when designing the new UX for mixed reality design.

At larger scales it’s possible to lock the Arkio worktable in a physical position in the environment. By toggling on the passthrough button, the user can pan and rotate the table or scale it to the right size, then select the lock button on the bracelet to lock the table in place. The table can always be repositioned by toggling the lock button off or by aggressively panning, rotating or scaling using the grip buttons or hand gestures.

The same principle applies at human scale, although at human scale it’s even more important to retain the right alignment between the virtual scene and the real world. Arkio auto-locks the user into position in certain cases when working at human scale, including:

- If the user imports a new room into Arkio from the device

- If the user has passthrough enabled on the bracelet and creates geometry on top of the real world

- If the user creates or paints geometry using the passthrough material

In these cases, the user expects the virtual and real worlds to remain aligned when moving around since parts of the real world are visible. Arkio also auto-aligns to a locked position when the Meta Quest wakes up or when the user walks between guardians.
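The auto-lock conditions listed above can be summarized as a small decision function. The following Python sketch is one reading of those rules, not Arkio's code; the `Event` and `Context` types and the `should_auto_lock` name are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Event(Enum):
    ROOM_IMPORTED = auto()
    GEOMETRY_CREATED = auto()
    GEOMETRY_PAINTED = auto()


@dataclass
class Context:
    at_human_scale: bool             # user is working at human (true) scale
    passthrough_enabled: bool        # passthrough toggled on via the bracelet
    uses_passthrough_material: bool  # the geometry involved uses the passthrough material


def should_auto_lock(event: Event, ctx: Context) -> bool:
    """Return True when the user should be locked into position automatically."""
    if not ctx.at_human_scale:
        return False
    if event is Event.ROOM_IMPORTED:
        return True
    if event is Event.GEOMETRY_CREATED:
        # Either modeling on top of the real world or creating passthrough geometry.
        return ctx.passthrough_enabled or ctx.uses_passthrough_material
    if event is Event.GEOMETRY_PAINTED:
        return ctx.uses_passthrough_material
    return False
```

Keeping the rule in one function makes the behavior easy to reason about: every case where part of the real world becomes visible at human scale leads to a lock.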

Adjusting alignment

Sometimes it’s necessary to make adjustments to better align the virtual scene and real world or to create a completely new alignment. However, when a user is in a locked position, the grip/hand gestures to pan, scale and rotate the scene no longer work, since otherwise the virtual scene and real world would fall out of alignment.

There are two ways to adjust alignment when in a locked position. The first is to toggle the lock button off on the secondary hand bracelet, make adjustments by panning or rotating the scene (or scaling if working at larger scales) and then toggle the lock button on again. Sometimes, however, it's convenient to slightly adjust the horizontal position of the scene while preserving the current rotation and vertical position. By aggressively panning only in horizontal directions, the user can unlock horizontal movement while vertical panning, rotation and scaling remain locked. The latter can be unlocked in the same manner using aggressive movements.
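One way to picture the constrained adjustment is as a set of per-axis locks that release only when a gesture exceeds a threshold. The Python sketch below covers panning only and is an assumption-laden illustration: the `AlignmentLock` class, the `filter_pan` method and the 0.30 m threshold are hypothetical, and Arkio's actual thresholds and gesture handling are not described in this post.

```python
import math
from dataclasses import dataclass, field

# Assumed threshold (meters) for a pan to count as "aggressive".
AGGRESSIVE_PAN_M = 0.30


@dataclass
class AlignmentLock:
    """Tracks which adjustments are still locked."""
    locked: set = field(
        default_factory=lambda: {"horizontal", "vertical", "rotation", "scale"}
    )

    def filter_pan(self, dx: float, dy: float, dz: float) -> tuple:
        """Return the part of a pan gesture that is allowed through.

        A large horizontal (x/z) pan unlocks horizontal movement, and a large
        vertical (y) pan unlocks vertical movement. Locked components are zeroed.
        """
        if math.hypot(dx, dz) >= AGGRESSIVE_PAN_M:
            self.locked.discard("horizontal")
        if abs(dy) >= AGGRESSIVE_PAN_M:
            self.locked.discard("vertical")
        out_x, out_z = (0.0, 0.0) if "horizontal" in self.locked else (dx, dz)
        out_y = 0.0 if "vertical" in self.locked else dy
        return (out_x, out_y, out_z)
```

In this sketch, a small pan is ignored while everything is locked, whereas one large horizontal pan releases horizontal movement and leaves the vertical position, rotation and scale untouched.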

Movement and visualization of locked position

When designing in mixed reality it’s important to be able to move around the virtual scene while remaining stationary in the real world. A user might need, for example, to scale the scene to reach distant geometry or to zoom towards some detail. This necessarily takes the user out of alignment.

A user in a locked position can teleport away from the position to exit the lock. As soon as a user exits a locked position, Arkio shows a location marker in the virtual scene indicating where the user is located in reality, even if the user has moved virtually. The user can snap back to the locked position simply by teleporting to the location marker, thus re-aligning the real and virtual worlds.
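The marker behavior can be modeled as a tiny piece of state. This Python sketch is a simplification under stated assumptions: it tracks position only (no rotation or scale), it ignores physical walking, and the `LockedView` class and its methods are hypothetical names rather than anything from Arkio.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class LockedView:
    locked_position: Vec3  # scene position that matches the user's real location
    user_position: Vec3    # where the user currently is in the virtual scene

    @property
    def aligned(self) -> bool:
        """True while the virtual and real positions coincide."""
        return self.user_position == self.locked_position

    def marker(self) -> Optional[Vec3]:
        """Position of the location marker, or None while the user is aligned."""
        return None if self.aligned else self.locked_position

    def teleport(self, target: Vec3) -> None:
        """Move the user virtually; leaving the locked position exits the lock."""
        self.user_position = target

    def snap_back(self) -> None:
        """Teleport to the marker, re-aligning the real and virtual worlds."""
        self.teleport(self.locked_position)
```

The marker is derived from the state rather than stored separately, so in this sketch it appears and disappears automatically as the user leaves and returns to the locked position.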

What’s next

The promise of Arkio is to empower anyone to design, mix and share realities, and we feel that this update is a big step forward in that direction. As the sections above show, there are many important considerations when enabling people to design in mixed reality and seamlessly blend the real and virtual worlds. With version 1.3 we’ve taken mixed reality design in Arkio to the next level, but it's still centered on single-user experiences. Next on our list is to enable people to collaborate in mixed reality when some or all of them are in the same physical location.